AI Agent Security Best Practices for Scalable Agentic Workflows

Enterprises are deploying AI agents faster than they are securing them. Agents now execute transactions, query databases, modify files, and interact with external systems, frequently without a human reviewing each action.

That deployment speed has a cost. According to Gartner, over 40% of agentic AI projects will be canceled by the end of 2027, with inadequate risk controls cited as one of the primary causes.

The controls most organizations rely on were built for systems that respond to human instruction. AI agents do not operate that way, and a manipulated agent does not pause before executing bad instructions.

In this blog, we will explore why AI agent security demands a different approach, the threats targeting autonomous systems, and the step-by-step practices every enterprise must implement.

Why AI Agent Security Is Different

Traditional cybersecurity was designed around human-operated systems, firewalls, endpoint protection, and access logs, all of which assume a person initiates each action. AI agents remove that assumption entirely.

Three characteristics make them a distinct AI security problem:

- Broad Access by Design

Agents connect to APIs, databases, cloud services, and external tools, each a potential entry point

- Persistent Memory

Agents retain context across sessions, giving attackers a window to manipulate stored state

- Autonomous Execution

A compromised agent can exfiltrate data, delete records, or trigger downstream workflows before anyone intervenes

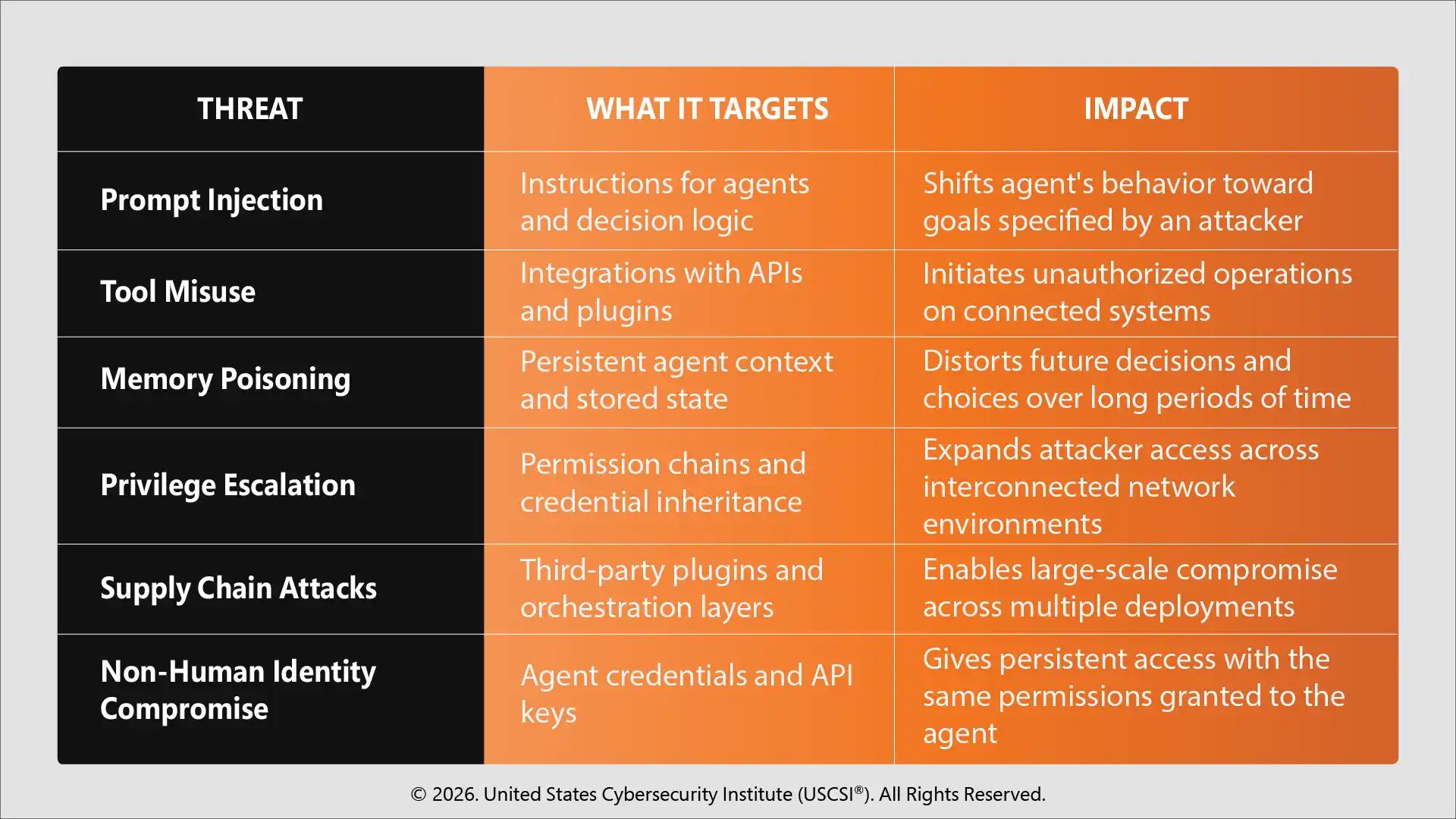

The Threat Landscape: What Attackers Target

Before building controls, security teams must understand what is being targeted. The attack surface for AI agents spans the instruction, memory, tooling, and identity layers, none of which traditional tools were built to cover.

Each threat vector below represents a distinct entry point requiring its own defensive response.

AI Agent Security Best Practices Step-by-Step

Securing an AI agent is not a single action; it is a sequence of deliberate decisions made across architecture, access, identity, and operations. Each step below addresses a distinct layer of AI agent security risks.

Step 1

Conduct a Risk Assessment Before Any Agent Goes Live

Map every system, data source, and API the agent will access. Identify permission requirements, flag high-consequence action paths, and document what a compromise would expose. USCSI®'s Cyber Risk Assessment: The Complete Guide for Security Leaders provides a structured framework purpose-built for this stage.

Step 2

Enforce Least-Privilege Access and Just-in-Time Permissions

Every agent should access only what its specific task requires. JIT permissions are granted for the duration of a task and revoked immediately after to limit the damage window when an agent is manipulated or drifts outside its intended scope.

Step 3

Apply Zero-Trust Architecture at the Infrastructure Level

Every agent action must be authenticated as a new request regardless of prior behavior. Authorization gates must sit at the infrastructure layer; enforcing them only at the prompt level is already too late.

Step 4

Assign Agent-Specific Credentials

Each agent must operate under its own explicitly defined identity. Credentials shared with human users or inherited across agents are difficult to audit and nearly impossible to revoke cleanly when a compromise occurs.

Step 5

Sandbox Untrusted or Newly Deployed Agents

Agents that have not yet established a behavioral baseline should operate in a firewalled environment with restricted system access. Sandboxing limits the blast radius of a compromise during that period; it is not a permanent state but a necessary one before full production access is granted.

Step 6

Validate Inputs, Encrypt Data, and Monitor Outputs at Every Boundary

Input validation prevents malicious prompts from getting to agent logic. Sensitive data is kept out of the wrong hands through data encryption at rest and in API traffic.

Output monitoring ensures agents cannot surface hidden instructions or sensitive content downstream. All three work as a unit; removing any one of them leaves the others incomplete.

Step 7

Require Human Approval for High-Consequence Actions

Data deletion, financial transactions, and security configuration changes must require explicit human sign-off before execution. This classification should be dynamic, the risk level of any action shifts depending on the systems the agent is connected to and the context of the current task.

Step 8

Monitor Agent Behavior Continuously at Runtime

Static controls applied at deployment are not enough for systems that evolve as they operate. Runtime threat detection must run continuously against established baselines, surfacing manipulation or drift before it compounds.

Full visibility into every tool call, memory state change, and decision point is non-negotiable across all multi-agent architectures, not just inputs and outputs. For teams managing this at scale, USCSI®'s analysis of the top AI SOC tools for 2026 covers the platforms purpose-built for agentic detection and response.

Build the Skills AI Agent Security Demands

Securing AI agents at the enterprise level requires more than technical controls, it demands strategic thinking across vulnerability management, access governance, and threat detection.

Professionals who want to lead this work need the depth to design, govern, and defend agentic systems at scale. The USCSI® cybersecurity certification Certified Senior Cybersecurity Specialist (CSCS™) covers exactly that, from threat detection and access governance to infrastructure security and compliance leadership. As AI agent deployments grow more complex, certified professionals are the ones organizations turn to when security cannot afford to be reactive.

FAQs

What are the unique compliance standards for AI agent security in 2026?

The NIST AI Risk Management Framework and OWASP LLM Top 10 are the most immediate places to begin enterprise AI agent security compliance.

Which cybersecurity skills will be important for professionals securing AI agents in 2026?

The three most sought-after skills for professionals are access governance, threat modeling for autonomous systems, and behavioral monitoring.

Which roles are most critical to enterprise organizations' AI agent security?

AI agent security strategy and implementation are most directly the responsibility of security architects, cloud security engineers, and CISOs.