OpenAI's GPT-5.4-Cyber: A Turning Point in the AI-Powered Cybersecurity Landscape

Artificial intelligence is reshaping nearly every industry, but few sectors feel the impact as sharply as cybersecurity. Attacks are more sophisticated, threat actors are better resourced, and the gap between offense and defense keeps widening. In April 2026, OpenAI took a direct step into that gap with GPT-5.4-Cyber, a specialized cybersecurity model designed to support defenders, researchers, and enterprises operating at the front line of digital security.

In this blog, we discuss what GPT-5.4-Cyber is, why its release matters, the arms race it has intensified, the risks it introduces, and what it all means for the cybersecurity landscape in 2026.

What Exactly Is GPT-5.4-Cyber?

GPT-5.4-Cyber is a cyber-focused variant of OpenAI's GPT-5.4 architecture, optimized specifically for security operations and advanced technical workflows. Unlike general-purpose AI models, it has been fine-tuned to handle queries that standard models block, reducing refusal rates on legitimate dual-use security tasks.

Key capabilities it brings to vetted users:

- Vulnerability analysis and discovery

- Malware detection and investigation

- Binary reverse engineering for analyzing compiled software without source code access

- Threat modeling and attack pattern analysis

- Secure code review and patch validation

- Incident response support

- Agentic execution across multi-step security workflows

OpenAI distributes the model through its Trusted Access for Cyber (TAC) program, which is limited to identity-verified users. Current participants include CrowdStrike, Cisco, Cloudflare, Palo Alto Networks, Oracle, and NVIDIA.

Why Does This Release Matter?

Until recently, most AI security tools handled repetitive, rule-based work like log analysis, anomaly detection, and alert triage. GPT-5.4-Cyber moves well beyond that. It introduces advanced reasoning and contextual understanding into cyber operations, giving security teams the ability to:

- Understand complex, multi-stage attack patterns.

- Explain malware behavior in plain language.

- Generate defensive strategies grounded in real-world threat context.

- Prioritize risk across complex environments.
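To make the last point concrete, here is a minimal sketch of what risk prioritization can look like once findings are in hand. The `Finding` fields, the weights, and the scoring formula are all hypothetical and chosen only for illustration; this is not how GPT-5.4-Cyber ranks risk, and it does not call any OpenAI API.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float         # 0-10, for example a CVSS base score
    exposed: bool           # reachable from the public internet?
    asset_criticality: int  # 1 (low) to 3 (business-critical); hypothetical scale


def risk_score(f: Finding) -> float:
    # Hypothetical weighting: internet exposure doubles the score,
    # and asset criticality scales it linearly.
    exposure_factor = 2.0 if f.exposed else 1.0
    return f.severity * exposure_factor * f.asset_criticality


def prioritize(findings: list[Finding]) -> list[Finding]:
    # Highest score first.
    return sorted(findings, key=risk_score, reverse=True)


findings = [
    Finding("internal wiki XSS", 6.1, False, 1),
    Finding("VPN gateway RCE", 9.8, True, 3),
    Finding("billing DB weak TLS", 5.3, False, 3),
]

for f in prioritize(findings):
    print(f"{risk_score(f):5.1f}  {f.name}")
```

In this toy data set the exposed, business-critical gateway ranks first even though the other findings also carry meaningful severity scores, which is the kind of context-aware ordering the bullet describes.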

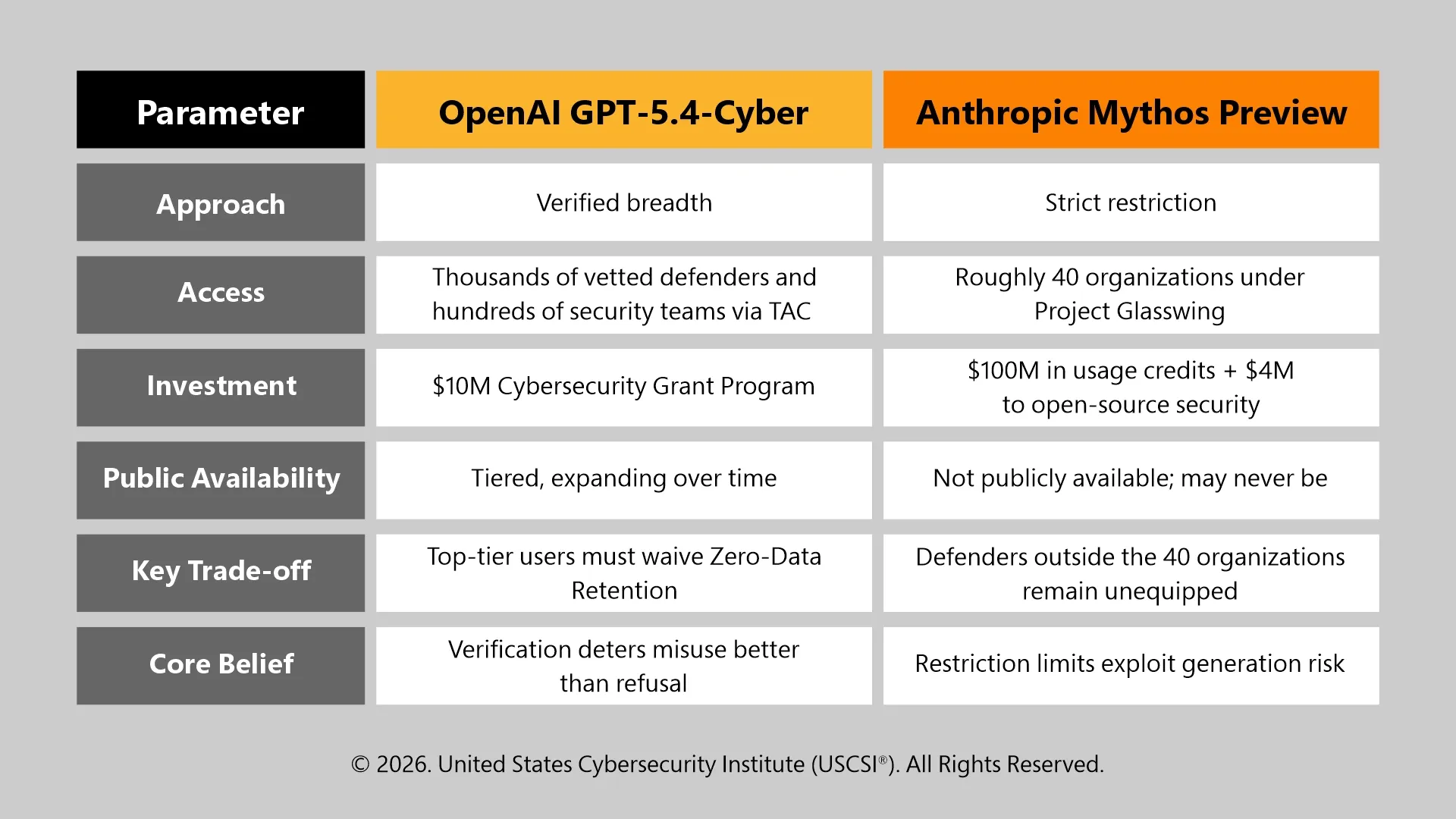

Are OpenAI and Anthropic Taking Different Bets on the Same Problem?

Yes. Both companies are working from the same reality: frontier AI models can find and exploit vulnerabilities. What they disagree on is who should have access to that capability, and under what conditions.

Why Is Dual-Use Risk the Central Problem Here?

Every capability that makes GPT-5.4-Cyber effective for defense also makes it useful for offense. A model that can reverse-engineer binaries to find vulnerabilities for patching can, in the wrong hands, find the same vulnerabilities for exploitation.

OpenAI has been direct: "Cyber capabilities are inherently dual use, risk is not defined by the model alone."

The numbers reflect how serious the threat landscape already is:

- AI-generated phishing emails now achieve 54% click-through rates, compared to 12% for manually crafted messages (State of AI Cybersecurity 2026).

- IBM X-Force recorded a 44% increase in attacks originating from exploitation of public-facing applications, partly driven by AI-assisted vulnerability discovery.

- 77% of organizations now use generative AI in their security stack, yet Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027 due to inadequate risk controls.

Attackers face none of the access restrictions that defenders do. They can use any model, from any provider, with no verification requirements. That asymmetry is the defining challenge of cybersecurity in 2026, and it sharpens with every capability advance.

What Does Regulation Add to This Picture?

The EU AI Act adds a layer neither company has fully addressed. Its most substantive obligations take effect on 2 August 2026, and high-risk AI systems, a category likely to include security automation tools, will need to demonstrate compliance with requirements around risk management, data governance, transparency, and human oversight. How tiered-access cybersecurity models fit within that framework remains an open question.

According to the USAII® insight "OpenAI Swaps ChatGPT's Core Model: A Closer Look at GPT-5.5 Instant," GPT-5.5 Instant became the first Instant-tier model designated as "High Capability" in both cybersecurity and biological domains, a classification previously reserved for Thinking and Pro variants. That reclassification signals where the capability floor now sits, and regulatory frameworks are still catching up to it.

What Does This Mean for Security Teams Operating Right Now?

OpenAI's Codex Security has already contributed to the identification and remediation of over 3,000 critical and high-severity vulnerabilities across open-source infrastructure. The speed at which these tools detect flaws is outpacing what manual review processes alone can match.

But deployment speed is outpacing security governance. According to the USCSI® insight "AI Agent Security Best Practices for Scalable Agentic Workflows," enterprises are deploying AI agents faster than they are securing them, with agents now executing transactions, querying databases, and modifying files, often without human review.

Autonomous systems demand a fundamentally different approach: one that accounts for persistent-memory risks and broad API access exposure, and that monitors agent behavior continuously at runtime rather than checking only inputs and outputs.
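As a rough illustration of runtime monitoring for agents, the sketch below checks each action an agent attempts before it runs, sends sensitive actions to human review, blocks agents that exceed an action budget, and keeps an audit trail. Every name here (`Action`, `RuntimeMonitor`, `REQUIRES_REVIEW`, the action kinds, the budget of three) is a hypothetical chosen for this example and does not come from any vendor's product or API.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    agent: str
    kind: str    # e.g. "query_db", "modify_file", "transfer_funds" (hypothetical kinds)
    target: str


# Hypothetical policy: action kinds that must not run without human sign-off.
REQUIRES_REVIEW = {"modify_file", "transfer_funds"}


@dataclass
class RuntimeMonitor:
    max_actions_per_agent: int = 3                  # illustrative budget
    counts: dict = field(default_factory=dict)      # agent name -> actions seen
    audit_log: list = field(default_factory=list)   # every decision is recorded

    def check(self, action: Action) -> str:
        """Return "allow", "review", or "block" and record the decision."""
        n = self.counts.get(action.agent, 0) + 1
        self.counts[action.agent] = n
        if n > self.max_actions_per_agent:
            decision = "block"    # unusual volume: stop and investigate
        elif action.kind in REQUIRES_REVIEW:
            decision = "review"   # pause until a human approves
        else:
            decision = "allow"
        self.audit_log.append((action.agent, action.kind, action.target, decision))
        return decision


monitor = RuntimeMonitor()
print(monitor.check(Action("triage-bot", "query_db", "alerts")))
print(monitor.check(Action("triage-bot", "modify_file", "/etc/hosts")))
```

The point of the design is that the decision depends on what the agent does over time (its running action count and the kind of action), not only on the content of a single prompt or response. A production system would need far richer signals than this toy policy.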

Security teams that want to operate responsibly in this environment need structured, verified cybersecurity skills across threat modeling for autonomous systems, access governance, and AI-era compliance. USCSI® cybersecurity certifications are built specifically for cybersecurity professionals working at that intersection.

The Future of AI-Driven Cyber Defense

The near-term trajectory is already taking shape. Expect to see AI-assisted SOC automation, autonomous vulnerability management, AI-driven penetration testing, and real-time threat intelligence agents operating at machine speed.

GPT-5.4-Cyber signals that cybersecurity is becoming one of the most strategically important applications of artificial intelligence. It also signals that the skills, governance frameworks, and verified expertise required to deploy it responsibly are not optional. Organizations that integrate AI effectively into security operations stand to gain a significant defensive advantage.

At the same time, the risks of misuse, adversarial exploitation of AI systems themselves, and AI-powered cybercrime will continue to rise in parallel. The battle ahead is increasingly AI versus AI, and preparedness on the defensive side starts now.

FAQs

Is GPT-5.4-Cyber available to individual security researchers or only to organizations?

Individual researchers can apply through OpenAI's TAC program after completing identity verification and enabling phishing-resistant advanced account security.

Can GPT-5.4-Cyber be integrated directly into existing enterprise security tools and SIEM platforms?

Access is currently via API within verified TAC tiers; native SIEM integrations have not been specified by OpenAI.

Does GPT-5.4-Cyber replace the need for traditional penetration testing teams?

No. It accelerates specific workflows like vulnerability discovery but still requires human judgment for scope definition, risk prioritization, and remediation decisions.