Understanding Deepfake Technology Attacks: Detection and Prevention Strategies

Deepfake attacks have quickly become one of the most dangerous cybersecurity risks in the age of artificial intelligence (AI). They use highly realistic but fake media, such as audio, video, or images, to distort facts, trick individuals, or carry out sophisticated fraud.

A 2026 Thales report highlights that 48% of organizations identify AI-powered attacks as a major threat, 59% have encountered deepfake incidents, and 48% report reputational damage due to AI-generated misinformation.

The rise of digital media and the growing reliance of businesses on digital communication have sharply increased the number of deepfake attacks, enabling cybercriminals to target large numbers of individuals in very little time. This is why cybersecurity education is so important in today’s digital world.

Let us understand in detail what deepfake attacks are, how they work, and how you can safeguard against them.

What are Deepfake Attacks?

Deepfake attacks use AI-generated media that impersonates a real person’s voice, mannerisms, and looks to manipulate people or trick them into performing certain actions, such as making a transaction or revealing confidential information.

These attacks rely on advanced machine learning techniques to create fake media that looks and sounds highly realistic. However, there is some good news: AI-based deepfake detection tools can automatically detect 97% of deepfake faces, according to a University of Florida study.

The term ‘deepfake’ combines ‘deep learning’ and ‘fake’. Deep learning is a subset of AI that trains models on huge amounts of data to reproduce lifelike human features such as facial expressions, voice, and behavior. Deepfake media is used primarily in social engineering attacks, which exploit human trust rather than technical vulnerabilities.

How Does Deepfake Technology Work?

Deepfake technology commonly uses Generative Adversarial Networks (GANs), a type of AI model that consists of two important components:

- Generator – creates fake media

- Discriminator – checks whether the media looks real or not

The two are trained against each other: the generator tries to fool the discriminator, and the discriminator tries to catch the fakes. Over time, this contest improves the realism of the generated content.
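The generator/discriminator contest can be illustrated with a deliberately tiny sketch. It is a toy under stated assumptions, not how real deepfake models are built: the "media" is a single number, the real data is drawn from a normal distribution centred on 4, the generator only learns an offset `theta`, and the discriminator is a one-variable logistic classifier. All names and hyperparameters here are illustrative.

```python
import math
import random


def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def discriminator(x, w, b):
    """Estimated probability that sample x is real."""
    return sigmoid(w * x + b)


def generator(z, theta):
    """Turn noise z into a fake sample by shifting it by theta."""
    return z + theta


def discriminator_step(w, b, real, fake, lr):
    """One gradient-ascent step on log D(real) + log(1 - D(fake))."""
    grad_w = grad_b = 0.0
    for x in real:
        d = discriminator(x, w, b)
        grad_w += (1.0 - d) * x
        grad_b += 1.0 - d
    for x in fake:
        d = discriminator(x, w, b)
        grad_w -= d * x
        grad_b -= d
    n = len(real) + len(fake)
    return w + lr * grad_w / n, b + lr * grad_b / n


def generator_step(theta, w, b, noise, lr):
    """One gradient-ascent step on log D(G(z)) with respect to theta."""
    grad = sum((1.0 - discriminator(generator(z, theta), w, b)) * w for z in noise)
    return theta + lr * grad / len(noise)


def train(steps=2000, batch=32, lr=0.05, real_mean=4.0, seed=0):
    """Alternate discriminator and generator updates on fresh batches."""
    rng = random.Random(seed)
    w, b, theta = 0.0, 0.0, 0.0
    for _ in range(steps):
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [generator(z, theta) for z in noise]
        w, b = discriminator_step(w, b, real, fake, lr)
        theta = generator_step(theta, w, b, noise, lr)
    return theta, w, b


if __name__ == "__main__":
    theta, w, b = train()
    print(f"generator offset: {theta:.2f}, discriminator: w={w:.2f}, b={b:.2f}")
```

The generator never sees the real data directly; it only receives feedback through the discriminator's weights. The printed values depend on the seed and learning rate, and a setup this simple may oscillate rather than settle.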

Deepfake creation follows these steps:

- Collect images, videos, and audio samples of the target

- Train AI models to mimic original voice, facial expressions, and movements

- Generate fake media using these features.

The resulting media can be used for various purposes: not only to exploit people, but also for legitimate ends such as education.

What are the Various Types of Deepfake Cyberattacks?

Cybercriminals use several kinds of deepfake media in their attacks. Some common types include:

- Audio Deepfakes (Voice cloning)

Cybercriminals create voice messages resembling a particular person’s voice to carry out vishing attacks, create negative publicity for a political leader/celebrity, or impersonate executives in phone calls.

- Video Deepfakes

These are fake videos depicting a real person doing or saying things that they actually never did. Deepfake videos are mostly used for fraud or running misinformation campaigns.

- Fake Images

Fake images are the easiest media type to create and can be used for identity theft, fake profiles, or damaging reputations.

- Real-time Deepfakes

Attackers often use this type of deepfake during video calls to bypass identity verification and impersonate legitimate users.

What Do Attackers Use Deepfakes For?

Here are some common examples of how cybercriminals use deepfake technology for different malicious purposes:

- Fraud by impersonating an executive

Cybercriminals often use deepfakes to impersonate CEOs or senior leaders and trick employees into urgently transferring money or sharing confidential data.

- Business email compromise (BEC) attacks

Along with phishing emails, attackers employ deepfake audio or video to make fraudulent requests more convincing.

- Financial fraud and identity theft

Cybercriminals can also use deepfakes to try to bypass biometric authentication systems such as voice or facial recognition.

In one example noted by PwC, an employee at a multinational engineering firm approved a transfer exceeding $25 million after joining a video call in which every other participant, including a senior executive, was a highly convincing deepfake impersonation.

- Disinformation and manipulating political views

This is one of the easiest ways to spread false information and influence public opinion. It can sway elections and damage reputations.

- Social engineering attacks

Deepfakes can make social engineering attacks easier, as they enable attackers to impersonate individuals, create realistic social media profiles, and manipulate targets into sharing confidential information.

How to Detect Deepfake Attacks?

Though detecting deepfakes can be challenging, there are ways to spot and stop them before they cause significant damage.

Some obvious signs of deepfake content are:

- Unnatural facial expressions and eye blinking

- Lip movements that do not match the audio

- Lighting and shadows that vary abnormally

- Unnatural or robotic voice tones

- Unusual behavior, etc.

However advanced AI technology gets, creating a flawless deepfake remains very difficult. By watching for these signs, people can identify deepfake scams and protect themselves.
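One of the signs listed above, unnatural eye blinking, can be roughly quantified. The sketch below computes the eye aspect ratio (EAR) from six eye landmarks per frame and counts blinks as short runs of low EAR. It assumes the landmarks come from an external face landmark detector that is not shown here; the `threshold` and `min_frames` values are illustrative assumptions that would need tuning, and an odd blink rate is only a weak hint, not proof of a deepfake.

```python
import math


def eye_aspect_ratio(eye):
    """Eye aspect ratio (EAR) from six (x, y) landmarks p1..p6.

    p1 and p4 are the eye corners; p2, p3 lie on the upper lid and
    p6, p5 on the lower lid. EAR drops toward 0 as the eye closes.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_values, threshold=0.2, min_frames=2):
    """Count runs of at least min_frames consecutive frames below threshold."""
    blinks = 0
    run = 0
    for ear in ear_values:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress at the last frame
        blinks += 1
    return blinks


def blinks_per_minute(ear_values, fps):
    """Blink rate, given one EAR value per video frame."""
    minutes = len(ear_values) / fps / 60.0
    return count_blinks(ear_values) / minutes if minutes > 0 else 0.0
```

A reviewer could compare the resulting rate for a suspicious clip against genuine footage of the same person, treating a large gap as a reason to look more closely rather than as a verdict.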

How to Prevent Deepfake Attacks?

Organizations can use the following methods to keep their assets secure from deepfake attacks:

- Implement Multi-Factor Authentication (MFA)

MFA adds an extra layer of security, so that access does not rely solely on voice or video verification.
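As one concrete example of a second factor that a cloned voice or face cannot supply, here is a minimal sketch of time-based one-time passwords (TOTP, RFC 6238, built on HOTP from RFC 4226) using only the Python standard library. The function names and the `window` tolerance are choices made for this illustration; a production system would normally use a maintained library and protect the shared secret.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low nibble picks the offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str, at=None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current time step and `window` steps either side."""
    now = time.time() if at is None else at
    counter = int(now // step)
    ok = False
    for drift in range(-window, window + 1):
        if counter + drift < 0:
            continue
        expected = hotp(secret, counter + drift).encode()
        # Constant-time comparison avoids leaking how many digits matched.
        ok |= hmac.compare_digest(expected, submitted.encode())
    return ok
```

The code changes every `step` seconds and depends on a secret held by the user's device, so an attacker who has only copied someone's voice or appearance cannot produce it.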

- Establish Clear Verification Protocols

Organizations must have a secondary confirmation method in place, such as callback verification or approval workflows.
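A callback-plus-approval rule of this kind can be expressed as a small policy check. The sketch below is hypothetical throughout: the `PaymentRequest` fields, the `DIRECTORY` of known-good numbers, and the `DUAL_APPROVAL_THRESHOLD` value are assumptions made for illustration. The key idea is that the callback number always comes from an independent directory, never from the request itself.

```python
from dataclasses import dataclass, field

# Hypothetical directory of known-good numbers, maintained independently
# of any incoming request (all names and values here are illustrative).
DIRECTORY = {"cfo@example.com": "+1-555-0100"}
DUAL_APPROVAL_THRESHOLD = 10_000.0


@dataclass
class PaymentRequest:
    requester: str   # identity the caller claims
    amount: float
    channel: str     # e.g. "video_call", "email", "phone"
    callback_confirmed: bool = False
    approvers: set = field(default_factory=set)


def confirm_callback(request, dialed_number, requester_confirmed):
    """Mark the callback as done only if the directory number was dialed."""
    known = DIRECTORY.get(request.requester)
    if known is not None and dialed_number == known and requester_confirmed:
        request.callback_confirmed = True
    return request.callback_confirmed


def approve(request, approver):
    """Record an approval; the requester cannot approve their own request."""
    if approver != request.requester:
        request.approvers.add(approver)


def may_execute(request):
    """Callback is always required; large amounts also need two approvers."""
    if not request.callback_confirmed:
        return False
    if request.amount >= DUAL_APPROVAL_THRESHOLD:
        return len(request.approvers) >= 2
    return True
```

Under a rule like this, a convincing video call alone is never enough to release funds: the request stays blocked until someone reaches the real person on a known number and, for large amounts, two other people sign off.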

- Conduct Cybersecurity Awareness Training

Organizations should also conduct regular cybersecurity awareness training to educate employees on security best practices, password hygiene, and how to detect and prevent deepfake and social engineering attacks.

- Adopt Zero-Trust Security

Zero trust enforces continuous authentication and authorization: no user, system, or device is inherently trusted, and every request is verified, without exception.
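The "verify every request" idea can be sketched as a single authorization function that is called on each request and trusts nothing from earlier ones. Everything named here is an assumption for illustration: the request fields, the `POLICY` table, the demo `SIGNING_KEY`, and the simple HMAC-signed credential. Real deployments typically rely on an identity provider and a policy engine instead.

```python
import hashlib
import hmac
import time

SIGNING_KEY = b"demo-key-change-me"  # assumption: a managed secret in practice

# Hypothetical policy: which role may perform which action.
POLICY = {
    "finance": {"view_invoice", "create_payment"},
    "staff": {"view_invoice"},
}


def sign(user: str, role: str, expires_at: int) -> str:
    """Issue a signature binding user, role, and expiry together."""
    msg = f"{user}|{role}|{expires_at}".encode()
    return hmac.new(SIGNING_KEY, msg, hashlib.sha256).hexdigest()


def authorize(request: dict, now=None) -> bool:
    """Evaluate one request on its own; nothing carries over from earlier ones."""
    now = time.time() if now is None else now
    expected = sign(request["user"], request["role"], request["expires_at"])
    if not hmac.compare_digest(expected.encode(), request["signature"].encode()):
        return False  # identity claim is not authentic
    if now >= request["expires_at"]:
        return False  # credential expired: re-authenticate
    if not request.get("device_compliant", False):
        return False  # device posture check failed
    return request["action"] in POLICY.get(request["role"], set())
```

Because the role is part of the signed message, changing it in transit invalidates the signature, and a short expiry forces regular re-authentication instead of one-time trust.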

Learn more about this technique in the detailed article Strengthening Enterprise Security with a Zero Trust Approach, where you can understand how this technique works and how organizations can implement it.

- Use a Deepfake Detection Tool

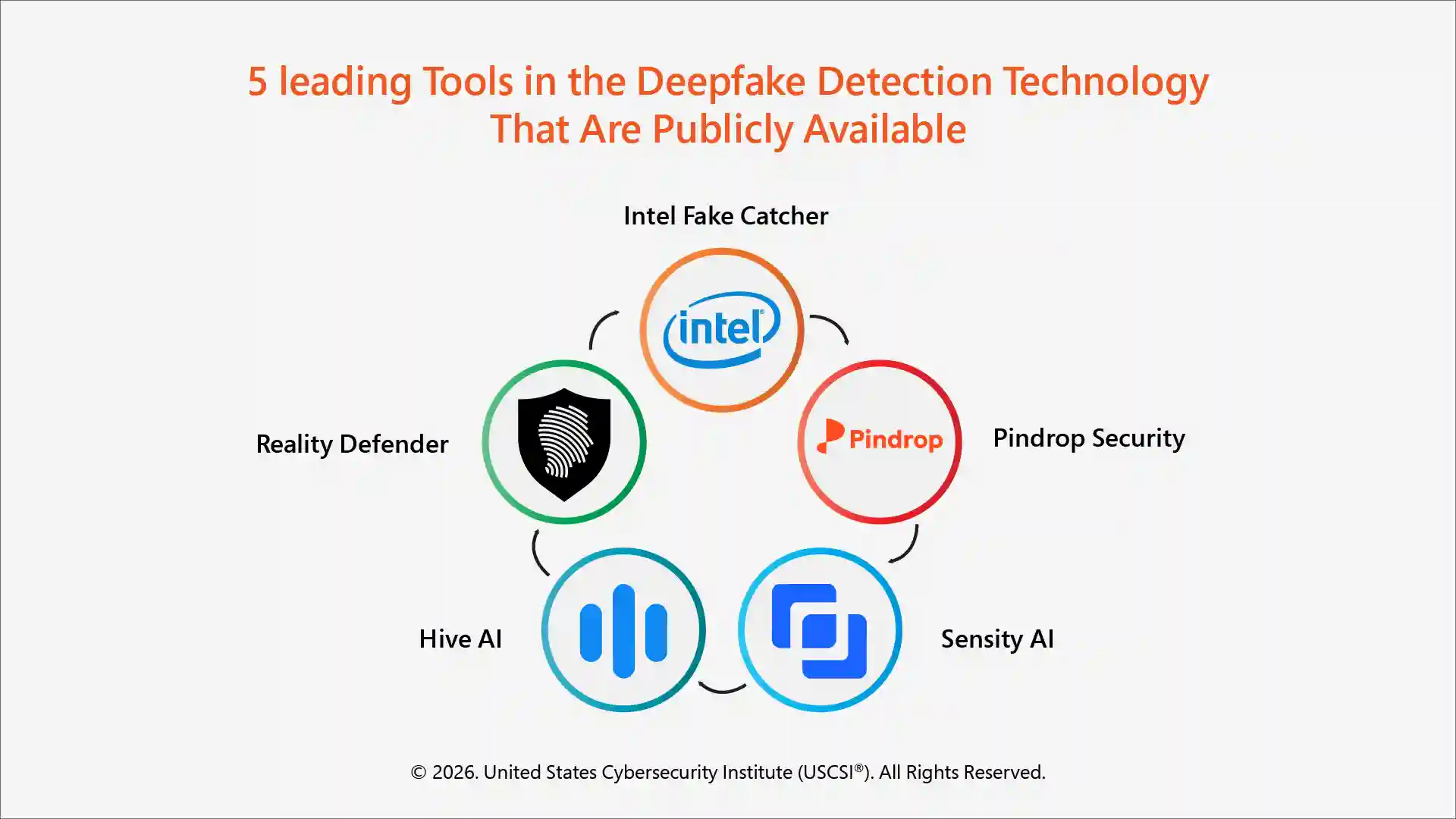

There are many tools for detecting deepfake AI that use AI and machine learning in cybersecurity and can also help identify and flag suspicious media.

Some popular publicly available deepfake detection technologies are shown below.

Final Thoughts

Deepfakes represent a serious cyber threat, using AI and social engineering to manipulate and exploit human trust. From executive fraud to large-scale misinformation, their impact is already significant and still growing.

Therefore, organizations must take the necessary steps to detect and prevent deepfake attacks, combining up-to-date tools and technologies with cybersecurity awareness and strict verification policies to safeguard their critical digital assets.

With USCSI® cybersecurity certifications like CCC™ and CSCS™, professionals can learn how to use AI and machine learning in cybersecurity to protect their assets not only against deepfake attacks but also against other emerging AI-powered cyber threats.